A Reminder about Polling

They're not very good at predicting elections. They're not designed to.

Happy Election Day.

National Review writer Dan McLaughlin posted a helpful reminder of how often polls miss the mark. On Twitter, he shared the polling for the 2018 Florida gubernatorial race between then-Congressman Ron DeSantis, a Republican, and Tallahassee’s Democratic mayor, Andrew Gillum.

Based on these numbers, you might be forgiven for believing that Gillum, once a rising star, would be elected.

The polls in the shaded area were conducted during the final week of the campaign.

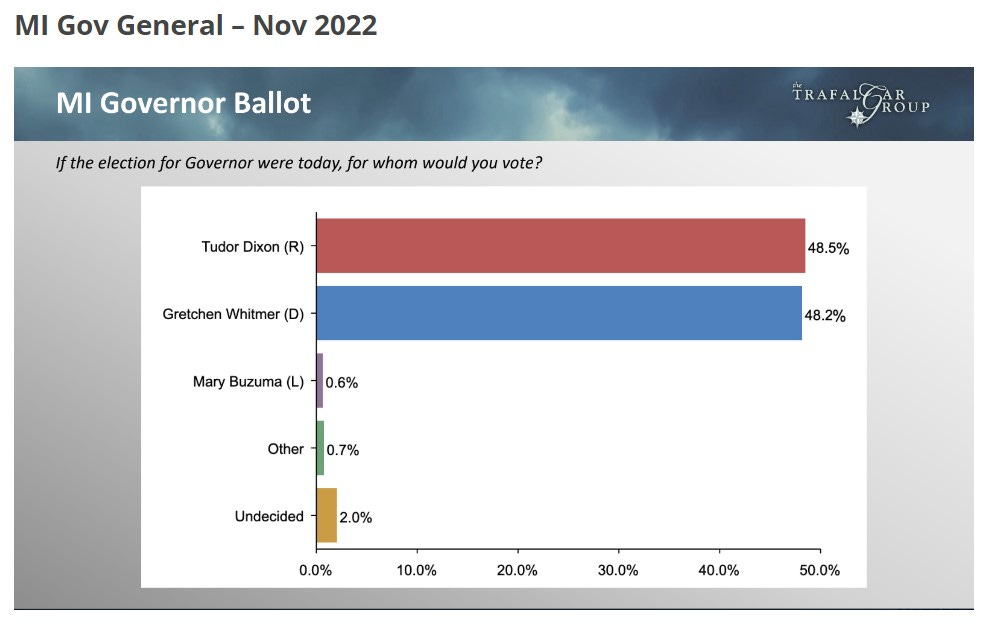

Only one survey, Trafalgar, got it right. Today, DeSantis is expected to cruise to a second term while Gillum is under federal indictment.

It interests me that many pundits and professional prognosticators have spent the past week positioning themselves - salvaging their credibility - to say they saw today’s election coming. They were singing a different tune two months ago. Most of them are based inside Washington’s beltway, with few excursions outside of it to see what’s really going on. You might be surprised how little actual campaign experience these pundits and prognosticators have.

That matters. They would be wise to accompany Salena Zito on her reporting from “the middle of somewhere.” Zito is America’s best political reporter and, with Brad Todd, co-author of “The Great Revolt,” the definitive book about the 2016 election. It remains a must-read.

Without that firsthand exposure, they often rely on phone calls with political professionals and public opinion polling to make their judgments, along with studies of past elections and demographics.

The problem is that when you rely so heavily on public polling - which is typically less accurate than private polling conducted by partisan pollsters - you skew the analysis. Here are a few facts about political polling that are worth being reminded of.

Polls are not predictive. They are snapshots in time.

The refusal rate, the share of people who decline to talk to pollsters, is now approaching 99 percent, and it has been above 90 percent for a while. Pollsters have to make more and more calls to complete each interview, so the cost per completed interview keeps climbing and polling grows more expensive.
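The refusal-rate arithmetic is worth spelling out. This short Python sketch estimates how many dials a pollster needs to complete a given number of interviews at a given response rate. It assumes, purely for illustration, a hypothetical target of 1,000 completed interviews and treats every dial as an identical attempt with the same chance of success, ignoring call-backs, screening, and partial interviews. The function name `dials_needed` is made up for this example.

```python
from fractions import Fraction
import math


def dials_needed(target_completes: int, response_rate: str) -> int:
    """Rough number of dials needed to finish `target_completes` interviews.

    `response_rate` is a decimal string (e.g. "0.01") so Fraction can hold
    it exactly and avoid floating-point rounding. Simplifying assumption:
    every dial has the same chance of producing a completed interview, so
    the expected number of dials is target / rate, rounded up.
    """
    rate = Fraction(response_rate)
    if not 0 < rate <= 1:
        raise ValueError("response_rate must be between 0 and 1")
    return math.ceil(target_completes / rate)


# 90 percent refusal rate -> 10 percent response rate
print(dials_needed(1000, "0.10"))   # 10000
# 99 percent refusal rate -> 1 percent response rate
print(dials_needed(1000, "0.01"))   # 100000
# 1.6 percent completion rate, as reported for the Times/Siena poll
print(dials_needed(1000, "0.016"))  # 62500
```

Under these simplified assumptions, falling from a 10 percent to a 1 percent response rate multiplies the dials required tenfold, which helps explain why the cost per completed interview keeps rising.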

Pollsters also struggle to reach voters who don’t answer their cell phones, and so many people have ditched their landlines. Many cell phones, like mine, run apps such as “RoboKiller” that screen calls from unknown numbers and divert them to voicemail. Pollsters don’t leave messages; they move on. To compensate, they “weight” their samples to reflect the demographics of the jurisdictions they’re polling.

As a result, sample sizes have shrunk in some cases (though not all). Field dates, the days pollsters are “in the field,” are also getting longer in some cases, which makes for a pretty blurry snapshot. Many polls are conducted over a weekend, which tends to skew Democratic, and Democrats are more likely than Republicans to answer pollsters’ calls. Pollsters are struggling to figure out how to get more Republicans, especially rural conservatives, to participate.

Robert Cahaly of Trafalgar reports that he uses a combination of shorter surveys, emails and text messages, and a mix of online polling and live calls. It seems to work: his late polls have proven uncannily reflective of outcomes in recent years.

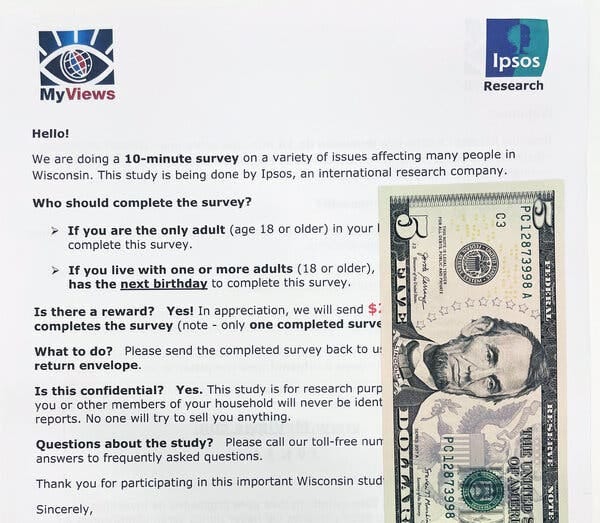

Nate Cohn, chief political analyst at The New York Times, says his newspaper is trying a different tactic: paying people.

> No one can be sure about outcomes on either front. The polls systematically underestimated Republicans in recent cycles, and pollsters have blamed something called nonresponse bias — the possibility that Donald J. Trump’s supporters are less likely to respond to surveys than demographically similar voters.
>
> Nonresponse bias is a serious challenge for pollsters, who can’t hope to know anything about the people who won’t take their surveys. Without that information, pollsters don’t have a way to even account for their existence, much less devise a way to reach them.
>
> So this fall, The New York Times tried something unusual: We paid people to take a poll.
>
> In partnership with Ipsos, The Times paid respondents up to $25 dollars to take a political survey in Wisconsin, the battleground state where polls infamously erred in 2016 and 2020.
>
> Beginning in early September, Ipsos mailed thousands of hard-to-miss priority mail and first-class envelopes to thousands of households across Wisconsin. The mailing contained a $5 bill and a letter promising an additional $20 if the respondent either took the survey online or returned the enclosed questionnaire in a provided return envelope.
>
> At the same time, we fielded a traditional Times/Siena phone poll and an online probability-based panel survey on Ipsos/KnowledgePanel. This experimental design allows us to compare the results of the two groups of respondents, which, in turn, might offer a portrait of the kinds of people who might be less likely to be represented in a typical survey — for example, a moderate who isn’t a political junkie, someone who doesn’t really enjoy talking to strangers, and, yes, a Republican-leaning voter.
>
> The data is still preliminary, and it will probably take at least six months, if not longer, before we can reach any final conclusions. But there is one immediate difference between the two groups, and that is in the polls’ response rates: Nearly 30 percent of households have responded to the survey so far — a figure dwarfing the 1.6 percent completion rate in the parallel Times/Siena poll.

One trend I’ve noticed late in this election is pollsters’ increasing reliance on “registered” rather than “likely” voters. Since not every registered voter casts a ballot (turnout may reach only 70 or 80 percent even in the best election years), polls of registered voters are less accurate and, again, tend to skew Democratic, because GOP turnout rates exceed those of Democrats in most elections.

Many of the disparities between polls result from how the sample is constructed. Some samples include a disproportionate number of Republicans or Democrats, or oversample or overweight younger or older voters. The people who actually vote may not mirror the overall demographics of an area: voters over 65, for example, typically make up a larger share of the electorate than of the population because they turn out at higher rates. Pollsters do their best to estimate what the turnout population will look like. Sometimes they get it wrong.
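To see why the assumed makeup of the electorate matters so much, here is a minimal Python sketch of simple demographic weighting (cell weighting, a basic form of post-stratification). Every number is hypothetical, invented for illustration: a raw sample that skews young, and an assumed turnout model in which voters over 65 make up a bigger share of the electorate. Real pollsters weight on many variables at once, often with an iterative method called raking, so treat this only as a sketch of the idea. The function name `weighted_topline` and "Candidate A" are made up for this example.

```python
# All figures below are hypothetical, chosen only to illustrate weighting.
# Share of respondents in each age group in the raw sample:
sample_share = {"18-44": 0.50, "45-64": 0.30, "65+": 0.20}
# Share of each age group supporting Candidate A:
support_a = {"18-44": 0.60, "45-64": 0.48, "65+": 0.40}
# Assumed makeup of the people who will actually vote (the turnout model):
turnout_share = {"18-44": 0.35, "45-64": 0.35, "65+": 0.30}


def weighted_topline(sample, support, target):
    """Candidate A's support after weighting each group to its target share."""
    # Each respondent in group g counts target[g] / sample[g] times.
    weights = {g: target[g] / sample[g] for g in sample}
    numerator = sum(sample[g] * weights[g] * support[g] for g in sample)
    denominator = sum(sample[g] * weights[g] for g in sample)
    return numerator / denominator


# Weighting the sample to its own shares leaves it unchanged (the raw topline).
raw = weighted_topline(sample_share, support_a, sample_share)
weighted = weighted_topline(sample_share, support_a, turnout_share)
print(f"Unweighted: {raw:.1%}")       # Unweighted: 52.4%
print(f"Weighted:   {weighted:.1%}")  # Weighted:   49.8%
```

In this made-up example, the same interviews move Candidate A from above 50 percent to below it, purely because of the turnout assumption. That is the judgment call pollsters make when they estimate the turnout population.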

It will be interesting to find out how well recent polling reflects election outcomes.

While I’ve consumed a massive share of public opinion surveys during my three decades of political campaign work - 35 US House and Senate campaigns in 25 states - I’m no polling expert. It’s a science, and a few of my readers, friends, and former political colleagues know this business far better than I do. I hope they’ll weigh in with comments and corrections to anything I’ve asserted here. I’m undoubtedly guilty of oversimplification and probably missed a thing or two.

I also put more stock in late-election polling trends than in any single survey.

But most of my polling friends agree that their industry is challenged, if not broken. Many believe that some polls are used malevolently to suppress the vote or are deliberately skewed to meet an objective, such as recruiting a candidate (I’ve seen it firsthand). I suspect that will be confirmed again as the election results roll in tonight.